In Part 1, I made the case that a CAPA is a system redesign request. Every incident reveals not one failure but several — and fixing only the obvious one leaves the rest wide open.

The question I didn't answer: how do you actually find all the places your system broke?

You can't just think harder. The problem with "think about what went wrong" is that your brain already has an answer. You walked into the room with a theory. Maybe you're right. But if your method for finding failure points is "whatever comes to mind," you will find exactly the failures you expected and miss the ones you didn't.

The tool that forces you to look systematically is the Ishikawa diagram — the fishbone. And the reason most people outside manufacturing can't use it is that nobody bothered to translate it.

Go to the ASQ page on fishbone diagrams. Read it. If you're not from manufacturing, I can almost guarantee you'll get stuck within two minutes.

The standard categories — Ishikawa's 6Ms — are Machine, Method, Material, Measurement, Manpower, Mother Nature. These make perfect sense if you're running a factory floor. They make almost no sense if you're investigating a coupon fraud, a service delivery failure, or a customer escalation.

I've watched smart operators stare at "Machine" and go blank. Not because they can't think. Because the label doesn't map to their world. And when the labels don't map, people do one of two things: they either skip the framework entirely and go back to gut feel, or they force-fit their thinking into categories they don't understand and produce garbage.

Neither is useful. The framework is powerful. The translation is missing. So here it is.

Equipment / Product / Systems — this is "Machine." In a services or tech context, it's your product, your tools, your CRM, your app, your internal systems. What did the system allow that it shouldn't have? What did it fail to block, flag, or restrict? If you're investigating a failure and you don't ask "what did the product or system do wrong," you're treating the system as a bystander. It's not. It's a participant.

Process / Method — the workflow, the SOP, the protocol. How is the work supposed to flow? Where are the handoffs? What are the steps, and which step broke? If no step broke — if the failure happened because there was no step — that's a finding too.

People — training, awareness, skill, intent. Did the person know what they were supposed to do? Were they trained? Were they monitored? Were there consequences for violations and were those consequences visible? This is the category most people jump to first. "Someone screwed up." The fishbone forces you to check five other categories before you settle on this one.

Materials — the inputs to the process. In manufacturing, this is raw material. In your world, it's the coupon itself, the test kit, the knowledge base article, the customer data — whatever the process operates on. Was the input designed with the right constraints? Did it have the right controls built in?

Measurement — the one people miss most. What instrumentation should have existed to catch this? What alerts, dashboards, reports, audits should have flagged this before it became an incident? If nothing in your measurement system raised a signal — or if it raised a signal and nobody looked — that's a failure point. Often the most important one.

Policy & Governance — this is where "Mother Nature" or "Environment" gets translated. What are the rules, the guardrails, the controls? Who approves what? What are the budget limits, the access controls, the maker-checker requirements? In manufacturing, environmental factors are things like humidity and temperature. In services and ops, the environment is your policy framework — the rules that constrain how people and systems operate.

Six categories. Six thinking hats. Each one forces you to look at the system from a different angle. The moment you skip one, you've created a blind spot.
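If you want to make the "don't skip a rib" rule mechanical, here is a minimal sketch in Python. The `Fishbone` class and its `blind_spots` method are names I made up for illustration, not part of any standard tool. All the sketch does is hold the six translated categories and report which ones still have no entries.

```python
from collections import defaultdict

# The six translated categories from this article.
CATEGORIES = (
    "Equipment / Product / Systems",
    "Process / Method",
    "People",
    "Materials",
    "Measurement",
    "Policy & Governance",
)


class Fishbone:
    """Hypothetical helper: collects failure points per rib and reports skipped ribs."""

    def __init__(self, problem):
        self.problem = problem
        self.ribs = defaultdict(list)

    def add(self, category, failure_point):
        # Reject labels outside the six categories so nothing is silently misfiled.
        if category not in CATEGORIES:
            raise ValueError(f"unknown category: {category!r}")
        self.ribs[category].append(failure_point)

    def blind_spots(self):
        """Return the categories that have no failure points recorded yet."""
        return [c for c in CATEGORIES if not self.ribs.get(c)]


fb = Fishbone("Event coupons redeemed months after the event")
fb.add("Materials", "Coupon had no expiry date")
fb.add("Measurement", "No alert on usage per code")
print(fb.blind_spots())
```

An empty rib is not automatically a problem. The point is that it should be empty because you looked and found nothing, not because you never looked.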

Now watch how this works on a concrete case.

In Part 1, I described a fictitious coupon fraud. A B2B partner event, 300 delegates, each given a discount coupon. Months after the event, the coupon codes were still being used — by people who were never supposed to have them. They found ways to use them repeatedly. We discovered it not because any system flagged it, but because someone stumbled across a transaction that looked off.

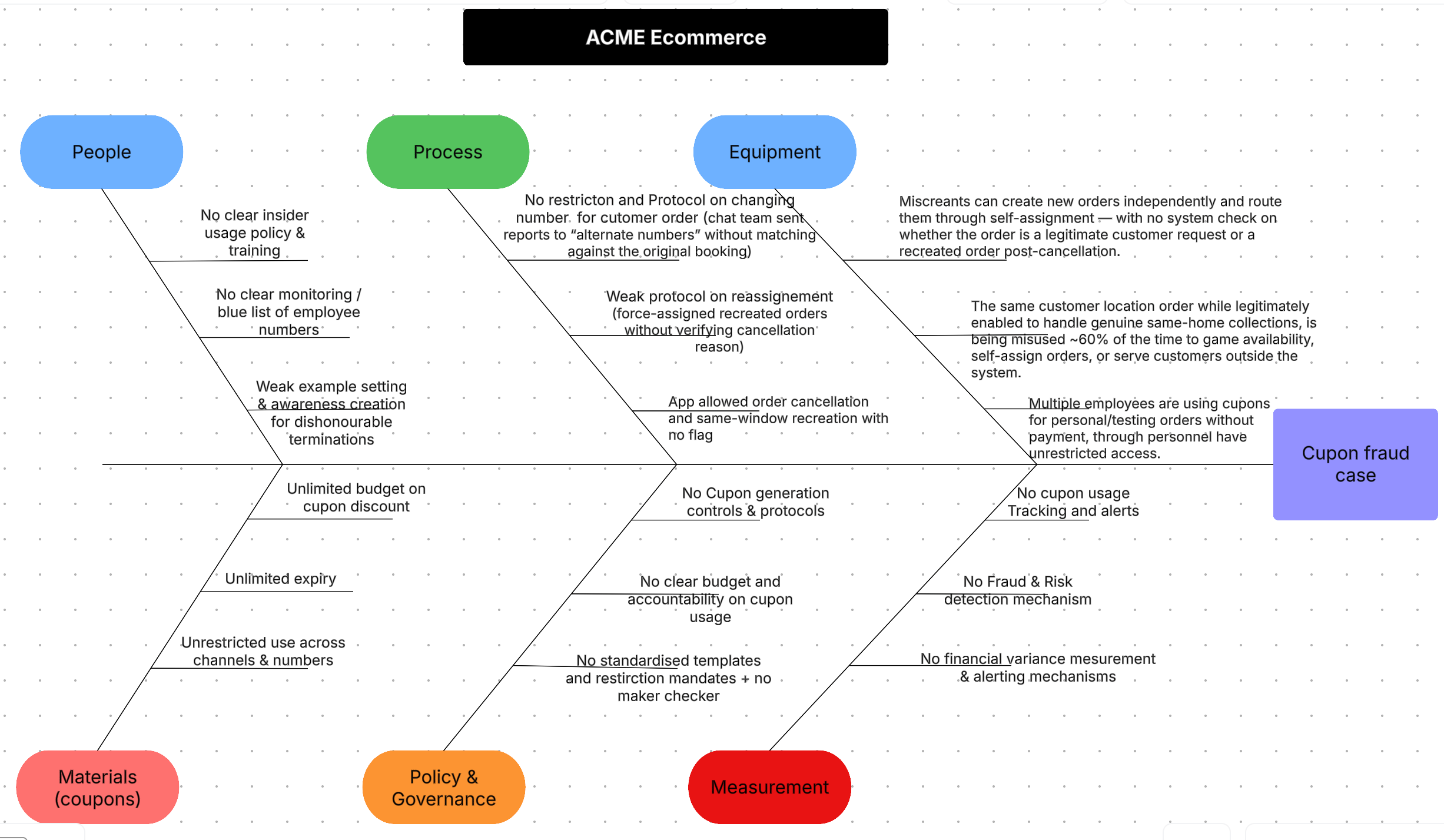

Here's the fishbone for that case.

Let me walk you through each rib — not the items on it, but how we thought about each category. The diagram shows the what. I want to show you the thinking.

Measurement. This is where I start, because it's where most people don't. The first question isn't "what went wrong." It's "why didn't we know something was going wrong?" If we'd had coupon usage tracking with alerts (tracking velocity, usage per code, and usage against expected volume), we'd have caught this in week one. We had a fraud detection system, but it didn't pick up the signal. We had financial reporting that should have surfaced variance in discount spend against plan, but either it didn't flag it or nobody looked at the flag. Three places a signal should have come from: one didn't exist, and the other two stayed silent or went unread. That's three failure points before we've even looked at the coupon itself.
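To make the three tracking ideas concrete (usage per code, velocity, usage against expected volume), here is a rough sketch. The function name, the thresholds, and the sample data are all hypothetical. A real version would read from your order system and use limits that fit the campaign.

```python
from collections import Counter
from datetime import date


def usage_alerts(redemptions, expected_total, max_uses_per_code=1, max_per_day=50):
    """Return alert strings for a list of (coupon_code, redemption_date) pairs.

    All thresholds are hypothetical defaults, not values from the case.
    """
    alerts = []

    # Usage per code: the same code redeemed more often than allowed.
    per_code = Counter(code for code, _ in redemptions)
    for code, uses in sorted(per_code.items()):
        if uses > max_uses_per_code:
            alerts.append(f"code {code} used {uses} times (limit {max_uses_per_code})")

    # Velocity: too many redemptions on a single day.
    per_day = Counter(day for _, day in redemptions)
    for day, count in sorted(per_day.items()):
        if count > max_per_day:
            alerts.append(f"{count} redemptions on {day.isoformat()} (limit {max_per_day})")

    # Volume: total redemptions against what the campaign planned for.
    if len(redemptions) > expected_total:
        alerts.append(f"{len(redemptions)} redemptions against {expected_total} expected")

    return alerts


# Made-up sample data: one code reused three times, one of them months later.
sample = [
    ("EVT-001", date(2024, 3, 1)),
    ("EVT-002", date(2024, 3, 1)),
    ("EVT-001", date(2024, 3, 2)),
    ("EVT-001", date(2024, 6, 9)),
]
alerts = usage_alerts(sample, expected_total=3, max_uses_per_code=1, max_per_day=1)
for alert in alerts:
    print(alert)
```

None of these checks is sophisticated. Each one is a few lines, and any one of them firing would have turned a months-long leak into a week-one conversation.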

Policy & Governance. No coupon generation controls. No protocol requiring expiry dates, usage limits, and access restrictions as mandatory fields before a coupon is issued. No budget accountability on coupon usage — nobody owned the number. No maker-checker on issuance. No standardised templates that enforce guardrails by default. If your coupon issuance process allows someone to create a coupon with unlimited uses, no expiry, and no budget cap — and nobody has to approve it — you've designed for failure.
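Here is one way the "mandatory fields before a coupon is issued" idea could look if the product enforced it. This is a sketch under my own assumptions: `CouponRequest`, its field names, and `issuance_violations` are illustrative, not the schema of any real system.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class CouponRequest:
    """Hypothetical issuance request; every guardrail field starts out unset."""
    code: str
    created_by: str
    approved_by: Optional[str] = None
    expires_on: Optional[date] = None
    max_uses: Optional[int] = None
    budget_cap: Optional[float] = None


def issuance_violations(req):
    """Return the list of guardrails this request fails; empty means it may be issued."""
    problems = []
    if req.expires_on is None:
        problems.append("missing expiry date")
    if req.max_uses is None or req.max_uses < 1:
        problems.append("missing usage limit")
    if req.budget_cap is None or req.budget_cap <= 0:
        problems.append("missing budget cap")
    # Maker-checker: someone other than the creator has to approve.
    if req.approved_by is None:
        problems.append("no approver (maker-checker)")
    elif req.approved_by == req.created_by:
        problems.append("maker and checker are the same person")
    return problems


open_ended = CouponRequest(code="EVT-2024", created_by="alice")
guarded = CouponRequest(
    code="EVT-2024",
    created_by="alice",
    approved_by="bob",
    expires_on=date(2024, 4, 30),
    max_uses=1,
    budget_cap=15000.0,
)
print(issuance_violations(open_ended))
print(issuance_violations(guarded))
```

The design choice worth noticing is the default. Every guardrail field starts as unset, and an unset field blocks issuance. The person creating the coupon has to opt in to each limit explicitly, instead of having to remember to add it.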

Equipment / Product. The system allowed new orders to be created and routed without checking whether the request was legitimate. A workaround existed — "Same Home Order" — that was legitimately needed for collections but was being misused roughly 60% of the time. The website allowed order cancellation and same-window recreation with no flag. The product wasn't a bystander. It was an enabler.

Process. No restriction on changing customer phone numbers on orders — which broke the "one number, one coupon" safeguard. Weak reassignment protocols allowed orders to be force-assigned to the perpetrators. If any single link in this chain had a protocol — restriction on number changes, restriction on same-window recreation, verification on reassignment — the fraud couldn't have continued. It needed all of them to be absent.

People. No clear insider usage policy. No monitoring or watchlist for employee orders. No visible examples of consequences for past violations. When you don't make examples of dishonourable behaviour, you're implicitly communicating that the risk of getting caught is low. People respond to incentives, including the absence of disincentives.

Materials. The coupon itself: unlimited discount, no expiry, unrestricted use across channels and numbers. In this case, it's the fix everyone reaches for first. "Fix the coupon." Yes, the coupon was poorly designed. But if you fix only the coupon and leave the other five ribs untouched, someone will find another way through. Guaranteed.

Count the failure points on that diagram. Eighteen. If you'd jumped straight to 5 Whys on "the coupon had no expiry" — which is the most obvious surface fix — you'd have found one root cause and written one corrective action. You'd have left seventeen holes open. And the next incident would have come through one of them.

That's why the fishbone comes first. Not because it's a nice-to-have. Because without it, 5 Whys is almost guaranteed to lock onto the wrong thing. Or more precisely, onto one right thing while missing seventeen other right things.

Now let me give you a second example to show this isn't a framework that only works on complex fraud cases.

Factory floor. Workers are not wearing safety equipment. Hard hats, gloves, goggles — whatever the site requires. The incident report says "workers not in compliance." The manager's instinct: "tell them to wear it."

Put that through the fishbone.

Equipment. Is the safety equipment comfortable? Is it the right size? Is it maintained? Has it degraded? I've seen sites where people take off hard hats because they cause headaches after two hours. That's not a discipline problem. That's an equipment problem.

Measurement. How do you know whether 100% of people are wearing equipment 100% of the time? Are you auditing? How often? What happens when an audit finds a violation? If the answer is "we don't really check," then the measurement system failed before the person did.

Process. When someone enters the designated area, is there a physical check? Does someone verify they're wearing the right equipment and wearing it correctly? Is there a gate — a forcing function — that blocks entry without compliance? If not, you've made compliance optional regardless of what the policy says.

Policy & Governance. What are the penalties for non-compliance? Are they enforced? Are they visible? Has anyone been penalised recently enough that the workforce remembers?

People. Have they been trained on why the equipment matters — not just told to wear it? Have you shared stories of what happens when it fails? Do they understand the risk, or do they just know the rule?

Materials. Is the right equipment available in the right sizes at the right locations? If someone has to walk ten minutes to find a pair of gloves that fit, you've introduced friction that competes with compliance.

Same framework. Completely different domain. The ribs light up differently. But the thinking process is identical: look at every category, not just the obvious one.

Now here's what I want you to resist.

After you've completed this fishbone — after you've mapped all the failure points across all six categories — your brain will want to jump to solutions. You'll see "no coupon usage tracking" and immediately think "let's build a tracker." You'll see "no entry gate for safety compliance" and think "let's install one."

Hold that urge.

What you have right now is a map of everything that broke. But some of these are root causes. And some of these are symptoms of something deeper. You don't know which is which until you drill into each one.

"No coupon usage tracking" — that might be the root cause. Or the root cause might be that nobody owns coupon metrics. Or that the fraud detection team's scope explicitly excludes internal coupons. You won't know until you ask why.

"No entry gate" — maybe the root cause is budget. Maybe the root cause is that the safety team proposed it two years ago and was overruled. Maybe it's that no one has ever done a process audit of that particular zone. Each of these leads to a different fix.

Some failure points will stop at two Whys. The answer is obvious and the fix is straightforward. Some will go five levels deep and surprise you. The discipline is to do it systematically for each failure point — not to pick the two or three that feel obvious and skip the rest.
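One way to keep yourself honest about "every failure point, not just the obvious ones" is to track which items have actually been drilled. Here is a small sketch. The `FailurePoint` structure and `undrilled` helper are hypothetical names, and the sample answer is illustrative only.

```python
from dataclasses import dataclass, field


@dataclass
class FailurePoint:
    """Hypothetical record of one item on the fishbone and its chain of whys."""
    category: str
    description: str
    whys: list = field(default_factory=list)  # successive answers to "why?"
    root_cause_confirmed: bool = False

    def ask_why(self, answer):
        self.whys.append(answer)


def undrilled(failure_points):
    """Return the failure points that have not yet reached a confirmed root cause."""
    return [fp for fp in failure_points if not fp.root_cause_confirmed]


points = [
    FailurePoint("Measurement", "No coupon usage tracking"),
    FailurePoint("Materials", "Coupon had no expiry"),
]

# Illustrative only: this chain happens to stop after a single why.
points[0].ask_why("Nobody owns coupon metrics")
points[0].root_cause_confirmed = True

remaining = undrilled(points)
print([fp.description for fp in remaining])
```

The list of whys can be any length. What the tracker cares about is whether you stopped because you confirmed a root cause, not whether you hit a particular count.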

This is where intellectual honesty matters more than intellectual ability. The 5 Whys process itself is mechanical. Anyone can ask "why" five times. The hard part is resisting the solution you had in mind before you started. The hard part is staying in the problem long enough to let the system tell you what's actually broken, rather than confirming what you already believed.

Each step in this sequence exists because without it, a specific human tendency takes over. The fishbone defeats tunnel vision — it forces you to look at categories you'd skip. The 5 Whys defeats premature solutioning — it forces you to drill past the first plausible answer. Doing them in sequence defeats confirmation bias — it forces you to map before you dig.

Skip any step and you let the corresponding trap back in.

That's Part 2. You now have the tool that finds all the places your system broke.

In Part 3, I'll walk through how to take each of these failure points and run 5 Whys properly: the drilling that separates symptoms from root causes, and the common mistakes that make most 5 Whys analyses shallow.

For now: take your last incident report. The one with one root cause and one corrective action. Run it through the six categories. Ask yourself: what did I miss?

For the formal origins of the fishbone diagram and its manufacturing roots, see the ASQ page on fishbone diagrams and the Wikipedia entry on Ishikawa diagrams.

~Discovering Turiya@work@life